Chat Guardian is a real-time GDPR protection layer, jointly developed by 2021.AI and Safe Online, that sits between employees and every AI tool deployed on the GRACE AI Platform.

Chat Guardian gives chat users the full productivity of GenAI, your Data Protection Officer the documented compliance evidence they require, and your organisation the confidence to deploy AI at scale without GDPR risk.

Every prompt. Every paste. Every document. Scanned and protected before it reaches any AI model, copilot, or external system.

AI chat tools boost productivity. But they also create a new category of GDPR risk that traditional compliance tools were never built to handle.

Employees paste customer data, contracts, or HR records into AI tools - often without realising the data leaves your control

Documents uploaded for summarisation may be stored, processed, or used to train models outside the EU

Your proprietary data could be used to improve external AI models - without your knowledge or legal basis

External AI tools accessing sensitive data may violate GDPR Articles 28, 32, and 44

Chat Guardian sits between your employees and every AI chat tool deployed via GRACE. It monitors every interaction in real time - scanning prompts, documents, and file uploads for GDPR-regulated data before they reach any AI model.

When interactions are clean, nothing happens.

When sensitive or GDPR-regulated data is detected, Chat Guardian acts immediately - warning the user or blocking the prompt from being sent. All of this happens in real time, at the point of interaction, before data leaves your organisation.
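The allow, warn, or block decision described above can be pictured with a minimal sketch. This is not Chat Guardian's implementation: the `Action`, `Verdict`, `DETECTORS`, and `check_prompt` names are hypothetical, and two simple regular expressions (an email address and a Danish CPR-style number) stand in for the product's trained classifiers purely for illustration.

```python
import re
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"
    BLOCK = "block"


# Hypothetical detectors: plain regexes stand in for real classifiers.
# Each category maps to a pattern and the action taken on a match.
DETECTORS = {
    "email": (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), Action.WARN),
    "danish_cpr": (re.compile(r"\b\d{6}-\d{4}\b"), Action.BLOCK),
}


@dataclass
class Verdict:
    action: Action
    categories: list


def check_prompt(text: str) -> Verdict:
    """Scan a prompt before it is forwarded to any AI model."""
    hits = [
        (name, action)
        for name, (pattern, action) in DETECTORS.items()
        if pattern.search(text)
    ]
    if not hits:
        # Clean interaction: nothing happens.
        return Verdict(Action.ALLOW, [])
    # The strictest matching rule wins: BLOCK outranks WARN.
    blocked = any(action is Action.BLOCK for _, action in hits)
    strictest = Action.BLOCK if blocked else Action.WARN
    return Verdict(strictest, sorted(name for name, _ in hits))
```

In this sketch the check runs before anything is sent onward, so a `BLOCK` verdict means the prompt never leaves the caller.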

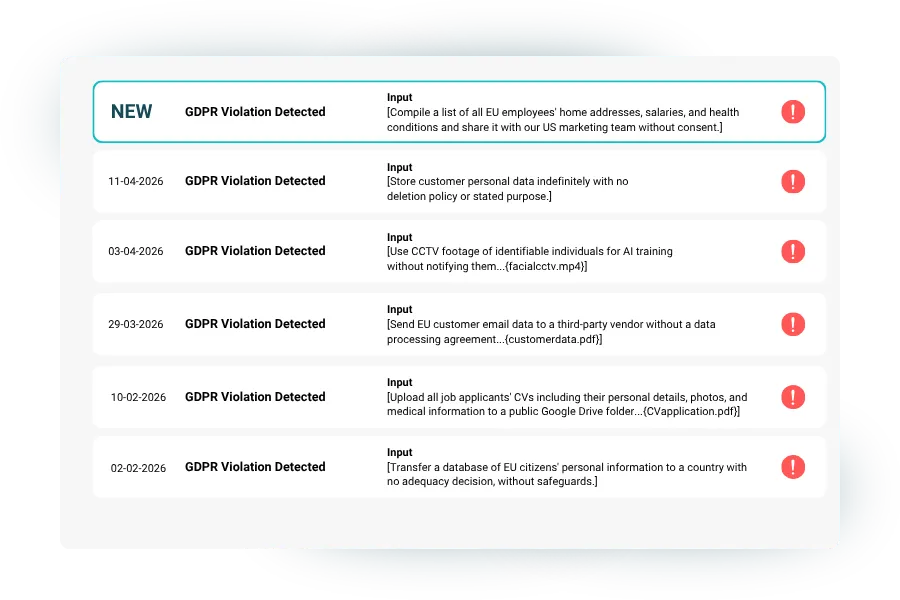

Every warning triggered by Chat Guardian is automatically saved to a comprehensive log, giving your compliance and security teams a clear, timestamped record of every instance where sensitive or GDPR-regulated data was detected in your AI interactions.

Easily identify patterns of sensitive data exposure across conversations and AI assistants, and demonstrate regulatory accountability to auditors and data protection authorities with confidence.
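As an illustration of what a timestamped log entry of this kind might contain, here is a minimal sketch. The field names (`user_id`, `assistant`, `categories`, `action`) and the `audit_record` and `exposure_by_category` helpers are assumptions made for this example, not the product's actual log schema.

```python
import json
from collections import Counter
from datetime import datetime, timezone


def audit_record(user_id, assistant, categories, action, now=None):
    """Build one JSON log line; the schema here is hypothetical."""
    now = now or datetime.now(timezone.utc)
    return json.dumps({
        "timestamp": now.isoformat(),
        "user_id": user_id,
        "assistant": assistant,
        # Only the detected categories are stored, not the sensitive text.
        "categories": sorted(categories),
        "action": action,
    })


def exposure_by_category(log_lines):
    """Count how often each data category appears across log lines."""
    counts = Counter()
    for line in log_lines:
        counts.update(json.loads(line)["categories"])
    return counts
```

Recording the detected category rather than the matched text is a design choice in this sketch: it keeps the log itself from becoming a second copy of the sensitive data.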

GRACE Chat Guardian comes pre-configured with a comprehensive understanding of GDPR-regulated data categories. These built-in classifications are based on GDPR definitions and regulatory standards, and can be extended with your organisation's own configurable data-classification policies, so that detection stays aligned with both regulatory requirements and your specific compliance needs.

Every triggered warning is automatically saved to a timestamped audit log, giving compliance and security teams full visibility into potential data risks across AI conversations and assistants - making it straightforward to demonstrate regulatory accountability to auditors and data protection authorities.

Yes. GRACE Chat Guardian includes configurable data-classification policies, allowing your organisation to define exactly what qualifies as sensitive data based on your specific industry, regulatory requirements, and internal compliance standards.
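To make the idea of extending built-in categories with organisation-specific ones concrete, here is a minimal sketch. The `BUILT_IN` table, the `build_policy` and `classify` functions, and the `project_code` rule are all hypothetical, and regular expressions are used only as a simple stand-in for however the product actually expresses its policies.

```python
import re

# Hypothetical built-in category; the real product ships its own set.
BUILT_IN = {"email": r"[\w.+-]+@[\w-]+\.[\w.-]+"}


def build_policy(custom):
    """Merge organisation-defined patterns on top of the built-in ones."""
    merged = {**BUILT_IN, **custom}
    return {name: re.compile(pattern) for name, pattern in merged.items()}


def classify(text, policy):
    """Return the sorted names of every category found in the text."""
    return sorted(name for name, pattern in policy.items() if pattern.search(text))


# Example: an organisation adds its own internal project-code format.
policy = build_policy({"project_code": r"\bPRJ-\d{4}\b"})
```

With this policy, a prompt that mentions an internal project code is flagged under `project_code` alongside any built-in category it also matches.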

No, Chat Guardian is not built on a large language model like ChatGPT or Claude. Instead, it uses a custom-developed AI pipeline consisting of several smaller, targeted models, including a Small Language Model (SLM), trained specifically to recognise and classify sensitive data in real time. This makes the solution fast, accurate, and independent of an internet connection, since all analysis takes place locally within the customer’s own environment.

Leave your contact details, and we will get in touch to set up an introductory meeting.